From Chaos to Clarity: Why NotebookLM is the Only Research Partner You Need in 2026

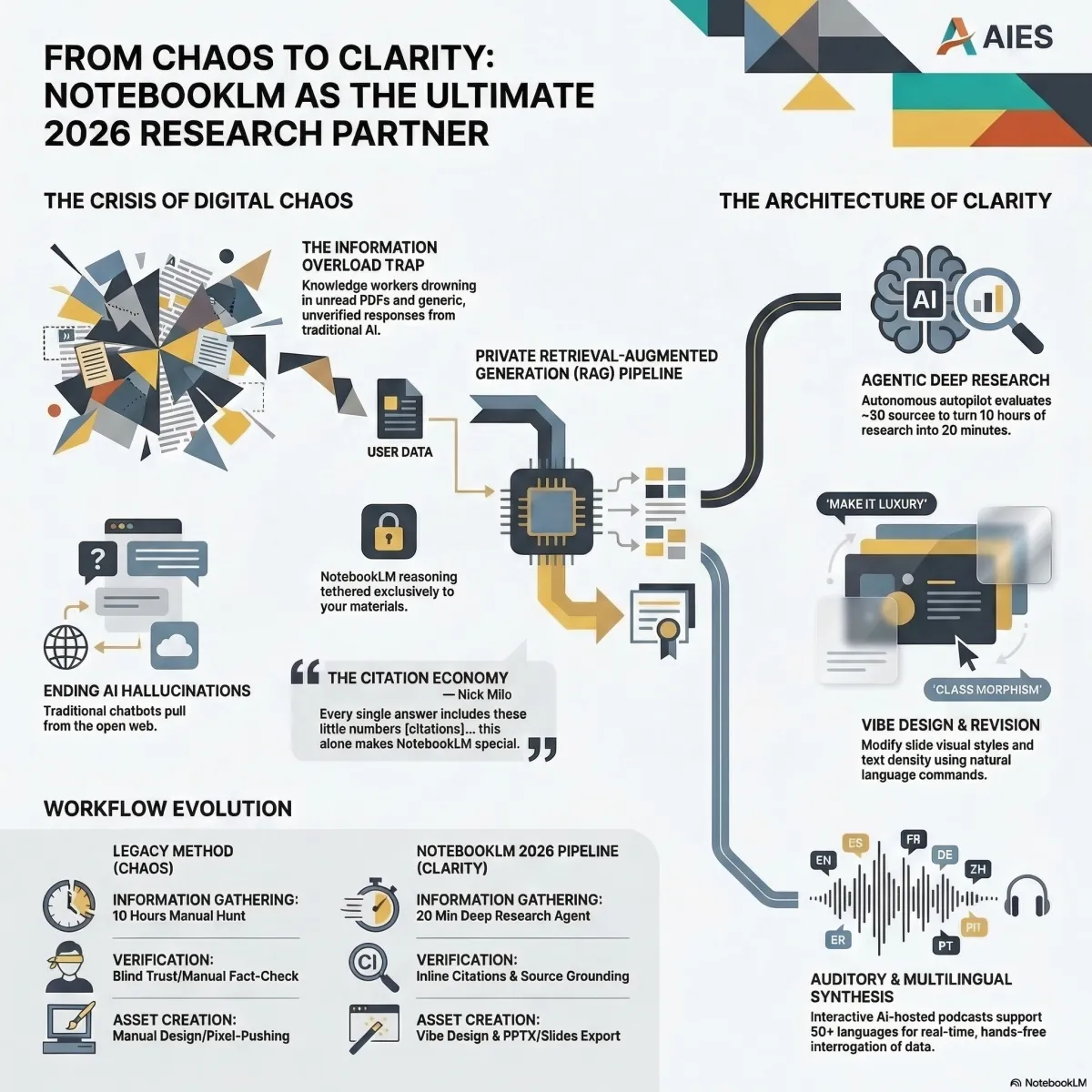

The Information Overload Crisis: Transitioning from Noise to Knowledge

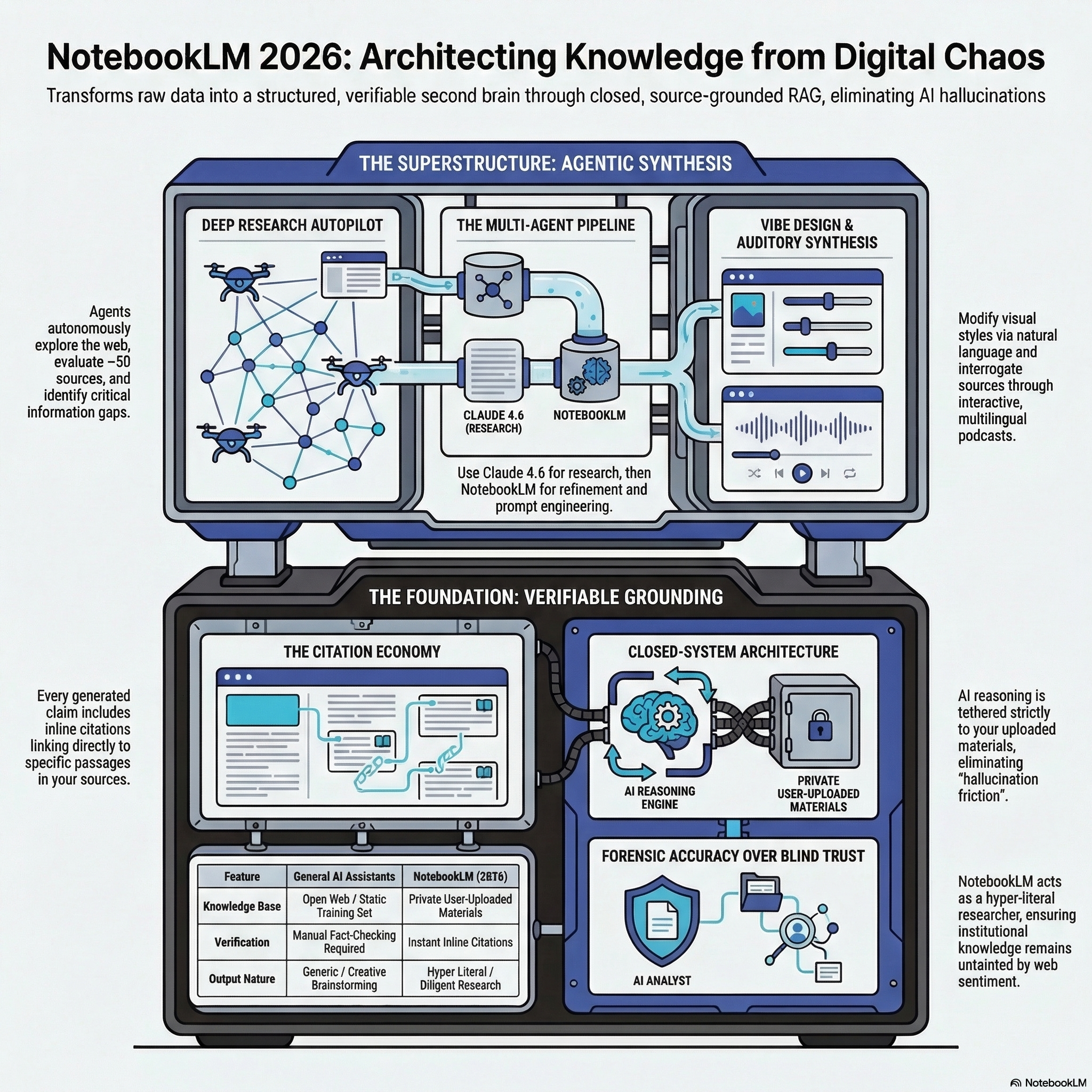

In 2026, the primary challenge for the knowledge worker is no longer acquiring information but managing digital chaos. We are drowning in a sea of unread PDFs, unindexed Zoom transcripts, and fragmented browser tabs. General-purpose AI assistants have struggled with this volume, often acting as "magic boxes" that offer generic, unverified responses or, worse, confident hallucinations.

NotebookLM represents the architectural shift from general-purpose chatbots to personalized institutional knowledge hubs. It functions as a "closed system" that answers from the data you provide. By grounding the AI’s responses in a private Retrieval-Augmented Generation (RAG) pipeline, NotebookLM cuts verification friction: every answer can be checked against its source, which turns raw data into a structured, verifiable second brain.

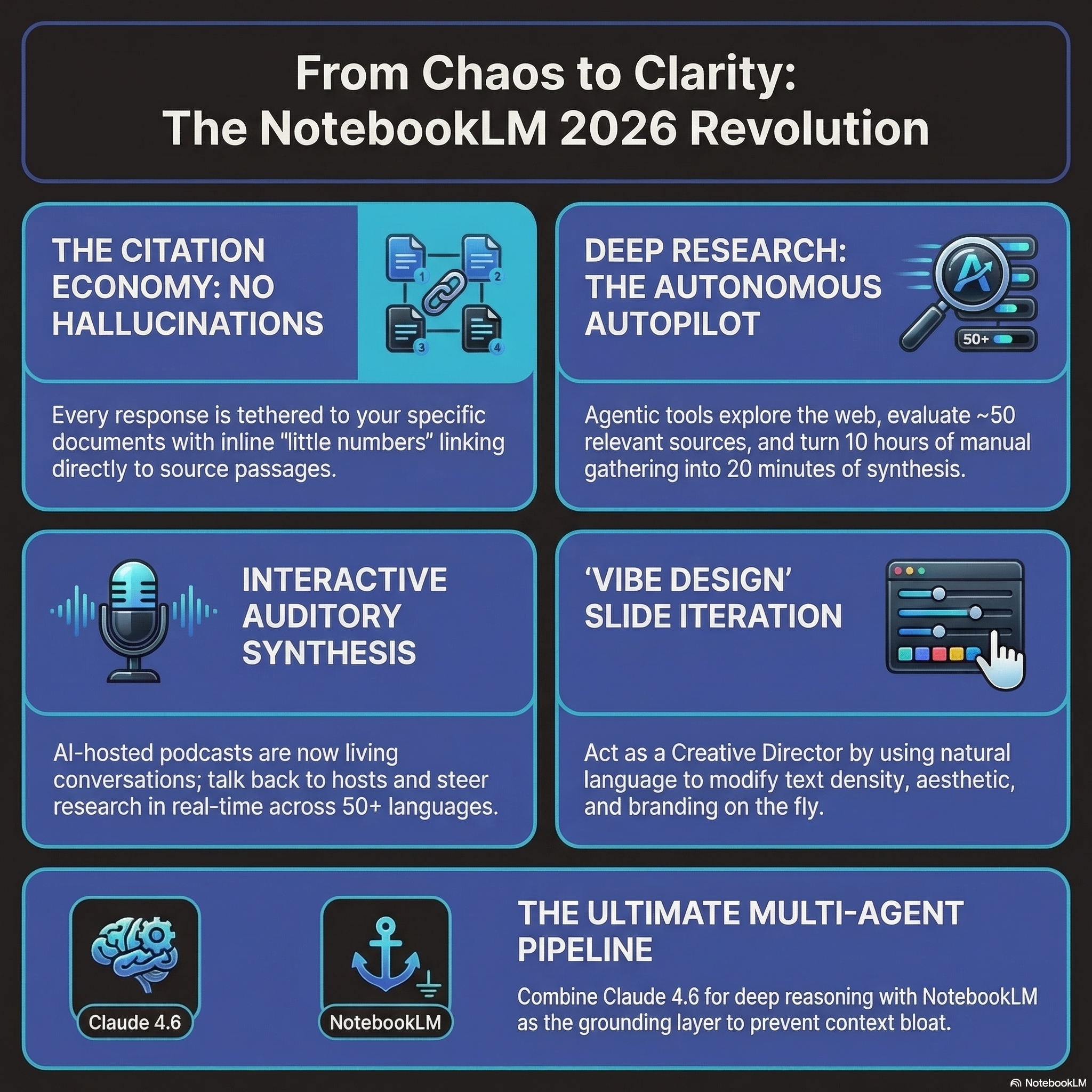

Strategic Pillar I: Verifiable Grounding & The Citation Economy

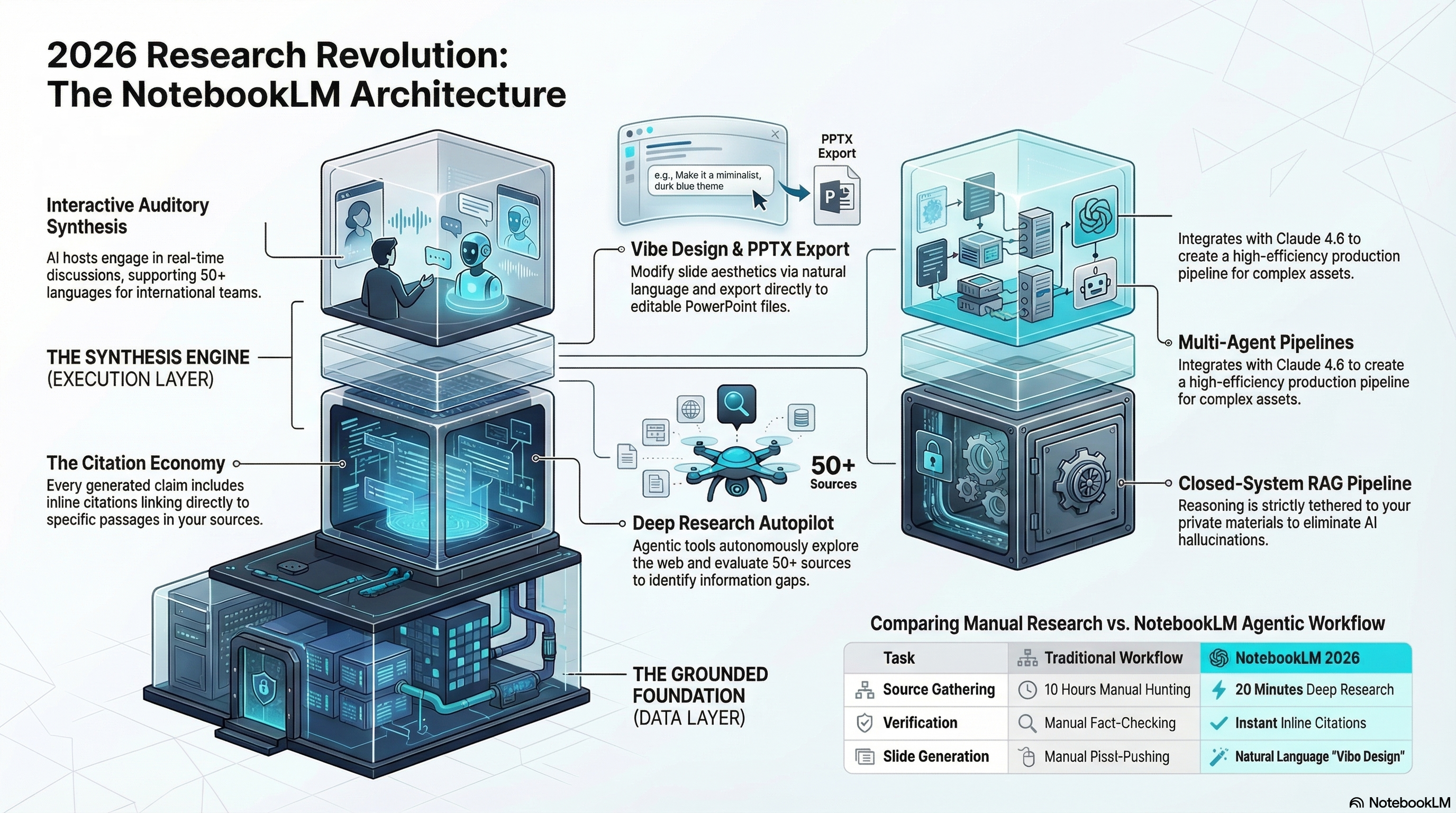

The fundamental value proposition of NotebookLM is its "Source-Grounded" architecture. Standard Large Language Model (LLM) chatbots such as ChatGPT answer primarily from a vast, static training set drawn largely from the open web; NotebookLM’s reasoning is instead tethered to your specific materials.

This creates a transparent "paper trail." Every claim generated by the system is accompanied by inline citations—the "little numbers" that link directly to the specific passage in your uploaded PDFs, transcripts, or web links.
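To make the "paper trail" idea concrete, here is a minimal sketch of source-grounded answering with numbered citations. It is not NotebookLM's implementation: the `Passage` type, the word-overlap scoring, and the file names are all illustrative assumptions, and a real RAG pipeline would use embeddings and a language model rather than keyword matching.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # hypothetical name of an uploaded file
    text: str    # one passage extracted from that file


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: list[Passage], top_k: int = 2) -> list[Passage]:
    """Rank passages by word overlap with the question; drop non-matches."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p.text)), i, p) for i, p in enumerate(passages)]
    scored = [s for s in scored if s[0] > 0]
    # Highest overlap first; ties keep the original upload order.
    scored.sort(key=lambda s: (-s[0], s[1]))
    return [p for _, _, p in scored[:top_k]]


def answer_with_citations(question: str, passages: list[Passage]) -> str:
    """Answer only from retrieved passages and number each one as a citation."""
    hits = retrieve(question, passages)
    if not hits:
        # A closed system declines instead of guessing.
        return "No supporting passage found in the provided sources."
    body = " ".join(f"{p.text} [{n}]" for n, p in enumerate(hits, start=1))
    refs = "\n".join(f"[{n}] {p.source}" for n, p in enumerate(hits, start=1))
    return f"{body}\n{refs}"


if __name__ == "__main__":
    library = [
        Passage("q3-report.pdf", "Revenue grew 12 percent in Q3."),
        Passage("kickoff-transcript.txt", "The launch date moved to March."),
    ]
    print(answer_with_citations("When is the launch date?", library))
```

The design point is the refusal branch: when nothing in the uploaded material matches, the sketch says so rather than falling back on outside knowledge, which is the behavior the "closed system" framing describes.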

"Every single answer includes these little numbers... this alone makes NotebookLM quite special." — Nick Milo, Linking Your Thinking

Analysis: For researchers and strategists, this is a transition from blind trust to forensic accuracy. While other AI tools might summarize a topic based on general web sentiment, NotebookLM acts as a hyper-literal, diligent researcher, ensuring that your institutional knowledge remains untainted by AI hallucinations.

Infographic created using NotebookLM☝️

Strategic Pillar II: "Vibe Design" & Conversation-Driven Presentations

The most significant leap in the platform's visual capability is the Prompt-Based Revision feature for slide decks. Previously, AI-generated decks were static artifacts. Today, the user serves as a "Creative Director" rather than a manual designer.

Through "Vibe Design," users can modify slide visual styles, text density, and formatting using natural language. Commands like "Make it luxury aesthetic with glass morphism," "Change accent colors from orange to red," or "Summarize for an executive audience" allow for iterative design at the speed of thought.

Technical Note: While the platform now supports PPTX Export, these slides currently export as images rather than editable text. High-level refinement therefore remains within the NotebookLM environment or via Google Slides overlays, which moves the focus away from pixel-pushing and toward strategic narrative direction.

Strategic Pillar III: Auditory Synthesis—The Interactive, Multilingual Podcast

NotebookLM’s Audio Overview has evolved from a simple text-to-speech output into a sophisticated synthesis engine. Two AI hosts engage in a nuanced discussion of your sources, distilling hours of reading into a coherent auditory brief.

The breakthrough for 2026 is the Interactive Mode. Listening is no longer passive: users can grant microphone access to "talk back" to the hosts, steer the conversation in real time, and ask clarifying questions. With support for over 50 languages, international teams can now process localized institutional data and receive summaries in their native tongue.

Analysis: This transforms static research into a "living conversation," allowing executives to absorb complex technical data during a commute while actively interrogating the sources through voice.

Strategic Pillar IV: Agentic Intelligence—The Deep Research Autopilot

The manual hunt for links and PDFs is a legacy workflow. NotebookLM addresses this through its Deep Research agentic tool. Unlike a keyword search, this agent autonomously explores the web, evaluates approximately 50 relevant sources, and identifies information gaps.

It produces a curated research report and automatically populates your notebook with the highest-utility sources, effectively turning 10 hours of manual information gathering into 20 minutes of high-level synthesis.

"This changes everything... research to refinement to prompt engineering to a finished product, all done with AI." — Julian Goldie, Julian Goldie SEO

Infographic created using NotebookLM☝️

Strategic Pillar V: The Multi-Agent Pipeline—Claude 4.6 + NotebookLM

For senior content strategists, the "insane" productivity gain comes from using NotebookLM as the grounding layer in a multi-tool pipeline involving Claude 4.6. This workflow creates a "Second Brain for AI Agents," preventing context bloat and saving tokens:

Research & Outline: Utilize the deep reasoning of Claude 4.6 to generate a comprehensive technical outline or research document.

Grounding & Refinement: Import that outline into NotebookLM to strip away "fluff" and ground the messaging in your private source data.

Prompt Engineering: Instruct NotebookLM to generate a high-converting, data-driven prompt based only on the refined sources.

Final Asset Production: Take that precision-engineered prompt back to Claude 4.6 to produce the final asset—whether it’s a landing page, a Security Handbook, or a Debugging Knowledge Base.
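The four handoffs above can be sketched as a small orchestration function. This is an illustration of the ordering only: `drafting_model` and `grounding_tool` are hypothetical callables standing in for Claude and NotebookLM, the prompt strings are invented, and no real API of either product is used or implied.

```python
from typing import Callable

# Any function that takes a prompt string and returns text (assumed interface).
Model = Callable[[str], str]


def run_pipeline(topic: str, drafting_model: Model, grounding_tool: Model) -> dict[str, str]:
    """Run the four steps in order and return every intermediate artifact."""
    # 1. Research & Outline: the drafting model produces a broad outline.
    outline = drafting_model(f"Write a technical outline about: {topic}")
    # 2. Grounding & Refinement: the grounding tool keeps what the sources support.
    refined = grounding_tool(f"Keep only claims supported by the sources:\n{outline}")
    # 3. Prompt Engineering: the grounding tool writes the production prompt.
    prompt = grounding_tool(f"Write a production prompt based only on:\n{refined}")
    # 4. Final Asset Production: the drafting model executes that prompt.
    asset = drafting_model(prompt)
    return {"outline": outline, "refined": refined, "prompt": prompt, "asset": asset}


if __name__ == "__main__":
    # Stub models so the sketch runs without any external service.
    def fake_drafter(text: str) -> str:
        return f"DRAFT<{text}>"

    def fake_grounder(text: str) -> str:
        return f"GROUNDED<{text}>"

    result = run_pipeline("zero-trust onboarding", fake_drafter, fake_grounder)
    for step, value in result.items():
        print(step, "->", value[:60])
```

Returning every intermediate artifact, not just the final asset, keeps the chain auditable: you can inspect what the grounding step removed before trusting the output.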

Analysis: Using one AI to write a grounded prompt for another produces results that generic prompting cannot match. It ensures the final output is surgically aligned with your specific institutional data.

Strategic Pillar VI: The "Compression Cycle" (The Pro Hack)

To bypass technical ceilings, power users employ the Compression Cycle. While the free tier is restricted to 50 sources, the paid tier allows for up to 300. For massive, multi-year projects, the workflow is as follows:

Populate a notebook with your maximum sources (50 or 300).

Generate a "Master Summary" note covering the entire knowledge base.

Use the "Convert to Source" feature to turn that synthesis into a single new source file.

Delete the original raw files to clear the slots.
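The slot arithmetic behind the cycle can be sketched with a toy model. The `Notebook` class below is hypothetical and only simulates source slots; it does not call NotebookLM, and the summary is represented by a file name, not by generated text.

```python
from dataclasses import dataclass, field


@dataclass
class Notebook:
    limit: int  # 50 on the free tier, 300 on the paid tier, per the figures above
    sources: list[str] = field(default_factory=list)

    def add(self, name: str) -> None:
        """Add one source, refusing once every slot is in use."""
        if len(self.sources) >= self.limit:
            raise ValueError("source limit reached")
        self.sources.append(name)

    def compress(self, summary_name: str) -> int:
        """Replace all current sources with one summary source.

        Returns the number of slots freed by the swap.
        """
        if not self.sources:
            raise ValueError("nothing to compress")
        freed = len(self.sources) - 1
        self.sources = [summary_name]
        return freed


if __name__ == "__main__":
    nb = Notebook(limit=50)
    for i in range(50):
        nb.add(f"raw-{i}.pdf")
    freed = nb.compress("master-summary-1.md")
    print(f"freed {freed} slots, {nb.limit - len(nb.sources)} now available")
```

Each cycle trades the full set of raw files for one summary slot, so capacity grows with every pass while detail depends on how much the Master Summary preserves.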

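Analysis: This cycle allows for effectively "unlimited research capacity." You are no longer managing raw files; you are synthesizing layers of knowledge, building a hierarchical intelligence system that grows in depth without hitting the source cap. One trade-off to keep in mind: once the raw files are deleted, citations can point only to the summary, not to the original passages.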

Strategic Pillar VII: Gemini 3 Integration—Universal Synthesis

While NotebookLM provides focused, siloed environments for specific topics, the integration with Gemini 3 breaks these silos. Within the Gemini interface, users can now attach multiple notebooks to a single chat session.

This enables cross-topic reasoning, such as comparing a technical RAG research notebook with a marketing analytics notebook. Furthermore, Gemini’s Canvas feature enables side-by-side iterative editing of scripts, reports, or code, grounded in the massive context of your collective NotebookLM library.

Infographic created using NotebookLM☝️

Conclusion: Your Digital Home for Thinking

NotebookLM is the optimal distillation layer for human wisdom in the AI age. We are moving away from treating AI as a "magic box" for ad hoc queries and toward an era of structured production pipelines in which data is the absolute foundation of every output.

In the future of work, value is created by those who can architect systems to turn institutional chaos into actionable intelligence.

In an age of infinite information, are you building a bank account of knowledge, or just surfing the noise?

Michael Carmine

Founder & CEO | AIEducationalSolutions.org